概述

构建智能体(或任何LLM应用)的难点在于使其足够可靠。虽然它们可能在原型中工作,但在实际使用案例中常常失败。智能体为何失败?

当智能体失败时,通常是因为智能体内部的LLM调用采取了错误的行动/没有达到我们的预期。LLM失败的原因有两个:- 底层LLM能力不足

- 没有将”正确的”上下文传递给LLM

刚接触上下文工程?请从概念概述开始,了解不同类型的上下文及其使用时机。

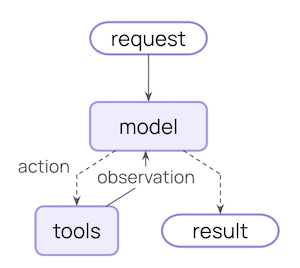

智能体循环

典型的智能体循环包括两个主要步骤:- 模型调用 - 使用提示和可用工具调用LLM,返回响应或执行工具的请求

- 工具执行 - 执行LLM请求的工具,返回工具结果

您可以控制的内容

为了构建可靠的智能体,您需要控制智能体循环每一步发生的情况,以及步骤之间发生的情况。瞬态上下文

LLM单次调用所看到的内容。您可以在不改变状态中保存内容的情况下修改消息、工具或提示。

持久上下文

跨轮次保存在状态中的内容。生命周期钩子和工具写入会永久修改此内容。

数据源

在整个过程中,您的智能体访问(读取/写入)不同的数据源:| 数据源 | 亦称 | 范围 | 示例 |

|---|---|---|---|

| 运行时上下文 | 静态配置 | 会话范围 | 用户ID、API密钥、数据库连接、权限、环境设置 |

| 状态 | 短期记忆 | 会话范围 | 当前消息、上传的文件、认证状态、工具结果 |

| 存储 | 长期记忆 | 跨会话 | 用户偏好、提取的见解、记忆、历史数据 |

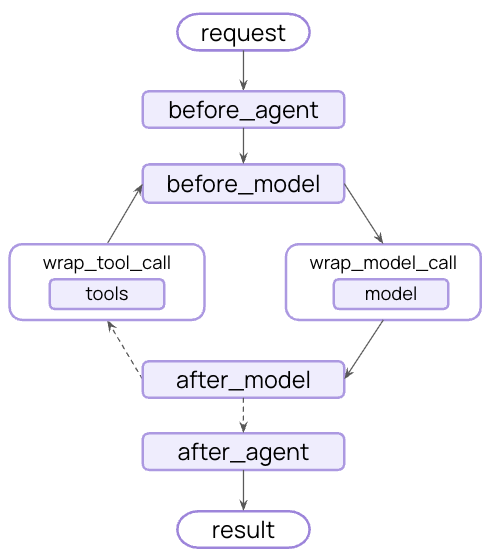

工作原理

LangChain的中间件是底层机制,使得使用LangChain的开发者能够实际进行上下文工程。 中间件允许您钩入智能体生命周期的任何步骤,并:- 更新上下文

- 跳转到智能体生命周期中的不同步骤

模型上下文

控制每次模型调用的输入内容 - 指令、可用工具、使用哪个模型以及输出格式。这些决策直接影响可靠性和成本。 所有这些类型的模型上下文都可以从状态(短期记忆)、存储(长期记忆)或运行时上下文(静态配置)中获取。系统提示

系统提示设置LLM的行为和能力。不同的用户、上下文或对话阶段需要不同的指令。成功的智能体利用记忆、偏好和配置来为对话的当前状态提供正确的指令。- 状态

- 存储

- 运行时上下文

从状态访问消息计数或对话上下文:

Copy

from langchain.agents import create_agent

from langchain.agents.middleware import dynamic_prompt, ModelRequest

@dynamic_prompt

def state_aware_prompt(request: ModelRequest) -> str:

# request.messages is a shortcut for request.state["messages"]

message_count = len(request.messages)

base = "You are a helpful assistant."

if message_count > 10:

base += "\nThis is a long conversation - be extra concise."

return base

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[state_aware_prompt]

)

从长期记忆中访问用户偏好:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import dynamic_prompt, ModelRequest

from langgraph.store.memory import InMemoryStore

@dataclass

class Context:

user_id: str

@dynamic_prompt

def store_aware_prompt(request: ModelRequest) -> str:

user_id = request.runtime.context.user_id

# Read from Store: get user preferences

store = request.runtime.store

user_prefs = store.get(("preferences",), user_id)

base = "You are a helpful assistant."

if user_prefs:

style = user_prefs.value.get("communication_style", "balanced")

base += f"\nUser prefers {style} responses."

return base

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[store_aware_prompt],

context_schema=Context,

store=InMemoryStore()

)

从运行时上下文访问用户ID或配置:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import dynamic_prompt, ModelRequest

@dataclass

class Context:

user_role: str

deployment_env: str

@dynamic_prompt

def context_aware_prompt(request: ModelRequest) -> str:

# Read from Runtime Context: user role and environment

user_role = request.runtime.context.user_role

env = request.runtime.context.deployment_env

base = "You are a helpful assistant."

if user_role == "admin":

base += "\nYou have admin access. You can perform all operations."

elif user_role == "viewer":

base += "\nYou have read-only access. Guide users to read operations only."

if env == "production":

base += "\nBe extra careful with any data modifications."

return base

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[context_aware_prompt],

context_schema=Context

)

消息

消息构成了发送给LLM的提示。 管理消息的内容对于确保LLM拥有正确的信息以良好响应至关重要。- 状态

- 存储

- 运行时上下文

当与当前查询相关时,从状态注入上传的文件上下文:

Copy

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from typing import Callable

@wrap_model_call

def inject_file_context(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Inject context about files user has uploaded this session."""

# Read from State: get uploaded files metadata

uploaded_files = request.state.get("uploaded_files", [])

if uploaded_files:

# Build context about available files

file_descriptions = []

for file in uploaded_files:

file_descriptions.append(

f"- {file['name']} ({file['type']}): {file['summary']}"

)

file_context = f"""Files you have access to in this conversation:

{chr(10).join(file_descriptions)}

Reference these files when answering questions."""

# Inject file context before recent messages

messages = [

*request.messages

{"role": "user", "content": file_context},

]

request = request.override(messages=messages)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[inject_file_context]

)

从存储中注入用户的电子邮件写作风格以指导草稿撰写:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from typing import Callable

from langgraph.store.memory import InMemoryStore

@dataclass

class Context:

user_id: str

@wrap_model_call

def inject_writing_style(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Inject user's email writing style from Store."""

user_id = request.runtime.context.user_id

# Read from Store: get user's writing style examples

store = request.runtime.store

writing_style = store.get(("writing_style",), user_id)

if writing_style:

style = writing_style.value

# Build style guide from stored examples

style_context = f"""Your writing style:

- Tone: {style.get('tone', 'professional')}

- Typical greeting: "{style.get('greeting', 'Hi')}"

- Typical sign-off: "{style.get('sign_off', 'Best')}"

- Example email you've written:

{style.get('example_email', '')}"""

# Append at end - models pay more attention to final messages

messages = [

*request.messages,

{"role": "user", "content": style_context}

]

request = request.override(messages=messages)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[inject_writing_style],

context_schema=Context,

store=InMemoryStore()

)

根据用户的管辖区域从运行时上下文注入合规规则:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from typing import Callable

@dataclass

class Context:

user_jurisdiction: str

industry: str

compliance_frameworks: list[str]

@wrap_model_call

def inject_compliance_rules(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Inject compliance constraints from Runtime Context."""

# Read from Runtime Context: get compliance requirements

jurisdiction = request.runtime.context.user_jurisdiction

industry = request.runtime.context.industry

frameworks = request.runtime.context.compliance_frameworks

# Build compliance constraints

rules = []

if "GDPR" in frameworks:

rules.append("- Must obtain explicit consent before processing personal data")

rules.append("- Users have right to data deletion")

if "HIPAA" in frameworks:

rules.append("- Cannot share patient health information without authorization")

rules.append("- Must use secure, encrypted communication")

if industry == "finance":

rules.append("- Cannot provide financial advice without proper disclaimers")

if rules:

compliance_context = f"""Compliance requirements for {jurisdiction}:

{chr(10).join(rules)}"""

# Append at end - models pay more attention to final messages

messages = [

*request.messages,

{"role": "user", "content": compliance_context}

]

request = request.override(messages=messages)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[inject_compliance_rules],

context_schema=Context

)

工具

工具让模型能够与数据库、API和外部系统交互。您定义和选择工具的方式直接影响模型能否有效完成任务。定义工具

每个工具都需要清晰的名称、描述、参数名称和参数描述。这些不仅仅是元数据 - 它们指导模型关于何时以及如何使用工具的推理。Copy

from langchain.tools import tool

@tool(parse_docstring=True)

def search_orders(

user_id: str,

status: str,

limit: int = 10

) -> str:

"""Search for user orders by status.

Use this when the user asks about order history or wants to check

order status. Always filter by the provided status.

Args:

user_id: Unique identifier for the user

status: Order status: 'pending', 'shipped', or 'delivered'

limit: Maximum number of results to return

"""

# Implementation here

pass

选择工具

并非每个工具都适用于所有情况。工具过多可能会使模型不堪重负(上下文过载)并增加错误;工具过少则会限制能力。动态工具选择基于认证状态、用户权限、功能标志或对话阶段来调整可用工具集。- 状态

- 存储

- 运行时上下文

仅在达到特定对话里程碑后启用高级工具:

Copy

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from typing import Callable

@wrap_model_call

def state_based_tools(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Filter tools based on conversation State."""

# Read from State: check if user has authenticated

state = request.state

is_authenticated = state.get("authenticated", False)

message_count = len(state["messages"])

# Only enable sensitive tools after authentication

if not is_authenticated:

tools = [t for t in request.tools if t.name.startswith("public_")]

request = request.override(tools=tools)

elif message_count < 5:

# Limit tools early in conversation

tools = [t for t in request.tools if t.name != "advanced_search"]

request = request.override(tools=tools)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[public_search, private_search, advanced_search],

middleware=[state_based_tools]

)

基于存储中的用户偏好或功能标志过滤工具:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from typing import Callable

from langgraph.store.memory import InMemoryStore

@dataclass

class Context:

user_id: str

@wrap_model_call

def store_based_tools(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Filter tools based on Store preferences."""

user_id = request.runtime.context.user_id

# Read from Store: get user's enabled features

store = request.runtime.store

feature_flags = store.get(("features",), user_id)

if feature_flags:

enabled_features = feature_flags.value.get("enabled_tools", [])

# Only include tools that are enabled for this user

tools = [t for t in request.tools if t.name in enabled_features]

request = request.override(tools=tools)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[search_tool, analysis_tool, export_tool],

middleware=[store_based_tools],

context_schema=Context,

store=InMemoryStore()

)

基于运行时上下文中的用户权限过滤工具:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from typing import Callable

@dataclass

class Context:

user_role: str

@wrap_model_call

def context_based_tools(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Filter tools based on Runtime Context permissions."""

# Read from Runtime Context: get user role

user_role = request.runtime.context.user_role

if user_role == "admin":

# Admins get all tools

pass

elif user_role == "editor":

# Editors can't delete

tools = [t for t in request.tools if t.name != "delete_data"]

request = request.override(tools=tools)

else:

# Viewers get read-only tools

tools = [t for t in request.tools if t.name.startswith("read_")]

request = request.override(tools=tools)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[read_data, write_data, delete_data],

middleware=[context_based_tools],

context_schema=Context

)

模型

不同的模型具有不同的优势、成本和上下文窗口。为当前任务选择合适的模型,这可能在智能体运行期间发生变化。- 状态

- 存储

- 运行时上下文

基于状态中的对话长度使用不同的模型:

Copy

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from langchain.chat_models import init_chat_model

from typing import Callable

# Initialize models once outside the middleware

large_model = init_chat_model("anthropic:claude-sonnet-4-5")

standard_model = init_chat_model("openai:gpt-4o")

efficient_model = init_chat_model("openai:gpt-4o-mini")

@wrap_model_call

def state_based_model(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Select model based on State conversation length."""

# request.messages is a shortcut for request.state["messages"]

message_count = len(request.messages)

if message_count > 20:

# Long conversation - use model with larger context window

model = large_model

elif message_count > 10:

# Medium conversation

model = standard_model

else:

# Short conversation - use efficient model

model = efficient_model

request = request.override(model=model)

return handler(request)

agent = create_agent(

model="openai:gpt-4o-mini",

tools=[...],

middleware=[state_based_model]

)

使用存储中用户偏好的模型:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from langchain.chat_models import init_chat_model

from typing import Callable

from langgraph.store.memory import InMemoryStore

@dataclass

class Context:

user_id: str

# Initialize available models once

MODEL_MAP = {

"gpt-4o": init_chat_model("openai:gpt-4o"),

"gpt-4o-mini": init_chat_model("openai:gpt-4o-mini"),

"claude-sonnet": init_chat_model("anthropic:claude-sonnet-4-5"),

}

@wrap_model_call

def store_based_model(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Select model based on Store preferences."""

user_id = request.runtime.context.user_id

# Read from Store: get user's preferred model

store = request.runtime.store

user_prefs = store.get(("preferences",), user_id)

if user_prefs:

preferred_model = user_prefs.value.get("preferred_model")

if preferred_model and preferred_model in MODEL_MAP:

request = request.override(model=MODEL_MAP[preferred_model])

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[store_based_model],

context_schema=Context,

store=InMemoryStore()

)

基于运行时上下文中的成本限制或环境选择模型:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from langchain.chat_models import init_chat_model

from typing import Callable

@dataclass

class Context:

cost_tier: str

environment: str

# Initialize models once outside the middleware

premium_model = init_chat_model("anthropic:claude-sonnet-4-5")

standard_model = init_chat_model("openai:gpt-4o")

budget_model = init_chat_model("openai:gpt-4o-mini")

@wrap_model_call

def context_based_model(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Select model based on Runtime Context."""

# Read from Runtime Context: cost tier and environment

cost_tier = request.runtime.context.cost_tier

environment = request.runtime.context.environment

if environment == "production" and cost_tier == "premium":

# Production premium users get best model

model = premium_model

elif cost_tier == "budget":

# Budget tier gets efficient model

model = budget_model

else:

# Standard tier

model = standard_model

request = request.override(model=model)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[context_based_model],

context_schema=Context

)

响应格式

结构化输出将非结构化文本转换为经过验证的结构化数据。当提取特定字段或为下游系统返回数据时,自由格式的文本是不够的。 工作原理: 当您提供模式作为响应格式时,模型的最终响应保证符合该模式。智能体运行模型/工具调用循环,直到模型完成工具调用,然后将最终响应强制转换为提供的格式。定义格式

模式定义指导模型。字段名称、类型和描述确切指定输出应遵循的格式。Copy

from pydantic import BaseModel, Field

class CustomerSupportTicket(BaseModel):

"""Structured ticket information extracted from customer message."""

category: str = Field(

description="Issue category: 'billing', 'technical', 'account', or 'product'"

)

priority: str = Field(

description="Urgency level: 'low', 'medium', 'high', or 'critical'"

)

summary: str = Field(

description="One-sentence summary of the customer's issue"

)

customer_sentiment: str = Field(

description="Customer's emotional tone: 'frustrated', 'neutral', or 'satisfied'"

)

选择格式

动态响应格式选择基于用户偏好、对话阶段或角色调整模式 - 早期返回简单格式,随着复杂性增加返回详细格式。- 状态

- 存储

- 运行时上下文

基于对话状态配置结构化输出:

Copy

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from pydantic import BaseModel, Field

from typing import Callable

class SimpleResponse(BaseModel):

"""Simple response for early conversation."""

answer: str = Field(description="A brief answer")

class DetailedResponse(BaseModel):

"""Detailed response for established conversation."""

answer: str = Field(description="A detailed answer")

reasoning: str = Field(description="Explanation of reasoning")

confidence: float = Field(description="Confidence score 0-1")

@wrap_model_call

def state_based_output(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Select output format based on State."""

# request.messages is a shortcut for request.state["messages"]

message_count = len(request.messages)

if message_count < 3:

# Early conversation - use simple format

request = request.override(response_format=SimpleResponse)

else:

# Established conversation - use detailed format

request = request.override(response_format=DetailedResponse)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[state_based_output]

)

基于存储中的用户偏好配置输出格式:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from pydantic import BaseModel, Field

from typing import Callable

from langgraph.store.memory import InMemoryStore

@dataclass

class Context:

user_id: str

class VerboseResponse(BaseModel):

"""Verbose response with details."""

answer: str = Field(description="Detailed answer")

sources: list[str] = Field(description="Sources used")

class ConciseResponse(BaseModel):

"""Concise response."""

answer: str = Field(description="Brief answer")

@wrap_model_call

def store_based_output(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Select output format based on Store preferences."""

user_id = request.runtime.context.user_id

# Read from Store: get user's preferred response style

store = request.runtime.store

user_prefs = store.get(("preferences",), user_id)

if user_prefs:

style = user_prefs.value.get("response_style", "concise")

if style == "verbose":

request = request.override(response_format=VerboseResponse)

else:

request = request.override(response_format=ConciseResponse)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[store_based_output],

context_schema=Context,

store=InMemoryStore()

)

基于运行时上下文(如用户角色或环境)配置输出格式:

Copy

from dataclasses import dataclass

from langchain.agents import create_agent

from langchain.agents.middleware import wrap_model_call, ModelRequest, ModelResponse

from pydantic import BaseModel, Field

from typing import Callable

@dataclass

class Context:

user_role: str

environment: str

class AdminResponse(BaseModel):

"""Response with technical details for admins."""

answer: str = Field(description="Answer")

debug_info: dict = Field(description="Debug information")

system_status: str = Field(description="System status")

class UserResponse(BaseModel):

"""Simple response for regular users."""

answer: str = Field(description="Answer")

@wrap_model_call

def context_based_output(

request: ModelRequest,

handler: Callable[[ModelRequest], ModelResponse]

) -> ModelResponse:

"""Select output format based on Runtime Context."""

# Read from Runtime Context: user role and environment

user_role = request.runtime.context.user_role

environment = request.runtime.context.environment

if user_role == "admin" and environment == "production":

# Admins in production get detailed output

request = request.override(response_format=AdminResponse)

else:

# Regular users get simple output

request = request.override(response_format=UserResponse)

return handler(request)

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[context_based_output],

context_schema=Context

)

工具上下文

工具的特殊之处在于它们既读取又写入上下文。 在最基本的情况下,当工具执行时,它接收LLM的请求参数并返回工具消息。工具完成其工作并产生结果。 工具还可以为模型获取重要信息,使其能够执行和完成任务。读取

大多数现实世界的工具需要的不仅仅是LLM的参数。它们需要用户ID用于数据库查询、API密钥用于外部服务或当前会话状态以做出决策。工具从状态、存储和运行时上下文读取以访问此信息。- 状态

- 存储

- 运行时上下文

从状态读取以检查当前会话信息:

Copy

from langchain.tools import tool, ToolRuntime

from langchain.agents import create_agent

@tool

def check_authentication(

runtime: ToolRuntime

) -> str:

"""Check if user is authenticated."""

# Read from State: check current auth status

current_state = runtime.state

is_authenticated = current_state.get("authenticated", False)

if is_authenticated:

return "User is authenticated"

else:

return "User is not authenticated"

agent = create_agent(

model="openai:gpt-4o",

tools=[check_authentication]

)

从存储读取以访问持久化的用户偏好:

Copy

from dataclasses import dataclass

from langchain.tools import tool, ToolRuntime

from langchain.agents import create_agent

from langgraph.store.memory import InMemoryStore

@dataclass

class Context:

user_id: str

@tool

def get_preference(

preference_key: str,

runtime: ToolRuntime[Context]

) -> str:

"""Get user preference from Store."""

user_id = runtime.context.user_id

# Read from Store: get existing preferences

store = runtime.store

existing_prefs = store.get(("preferences",), user_id)

if existing_prefs:

value = existing_prefs.value.get(preference_key)

return f"{preference_key}: {value}" if value else f"No preference set for {preference_key}"

else:

return "No preferences found"

agent = create_agent(

model="openai:gpt-4o",

tools=[get_preference],

context_schema=Context,

store=InMemoryStore()

)

从运行时上下文读取配置,如API密钥和用户ID:

Copy

from dataclasses import dataclass

from langchain.tools import tool, ToolRuntime

from langchain.agents import create_agent

@dataclass

class Context:

user_id: str

api_key: str

db_connection: str

@tool

def fetch_user_data(

query: str,

runtime: ToolRuntime[Context]

) -> str:

"""Fetch data using Runtime Context configuration."""

# Read from Runtime Context: get API key and DB connection

user_id = runtime.context.user_id

api_key = runtime.context.api_key

db_connection = runtime.context.db_connection

# Use configuration to fetch data

results = perform_database_query(db_connection, query, api_key)

return f"Found {len(results)} results for user {user_id}"

agent = create_agent(

model="openai:gpt-4o",

tools=[fetch_user_data],

context_schema=Context

)

# Invoke with runtime context

result = agent.invoke(

{"messages": [{"role": "user", "content": "Get my data"}]},

context=Context(

user_id="user_123",

api_key="sk-...",

db_connection="postgresql://..."

)

)

写入

工具结果可用于帮助智能体完成给定任务。工具既可以直接将结果返回给模型,也可以更新智能体的记忆,使重要上下文可用于未来步骤。- 状态

- 存储

使用Command写入状态以跟踪会话特定信息:

Copy

from langchain.tools import tool, ToolRuntime

from langchain.agents import create_agent

from langgraph.types import Command

@tool

def authenticate_user(

password: str,

runtime: ToolRuntime

) -> Command:

"""Authenticate user and update State."""

# Perform authentication (simplified)

if password == "correct":

# Write to State: mark as authenticated using Command

return Command(

update={"authenticated": True},

)

else:

return Command(update={"authenticated": False})

agent = create_agent(

model="openai:gpt-4o",

tools=[authenticate_user]

)

写入存储以跨会话持久化数据:

Copy

from dataclasses import dataclass

from langchain.tools import tool, ToolRuntime

from langchain.agents import create_agent

from langgraph.store.memory import InMemoryStore

@dataclass

class Context:

user_id: str

@tool

def save_preference(

preference_key: str,

preference_value: str,

runtime: ToolRuntime[Context]

) -> str:

"""Save user preference to Store."""

user_id = runtime.context.user_id

# Read existing preferences

store = runtime.store

existing_prefs = store.get(("preferences",), user_id)

# Merge with new preference

prefs = existing_prefs.value if existing_prefs else {}

prefs[preference_key] = preference_value

# Write to Store: save updated preferences

store.put(("preferences",), user_id, prefs)

return f"Saved preference: {preference_key} = {preference_value}"

agent = create_agent(

model="openai:gpt-4o",

tools=[save_preference],

context_schema=Context,

store=InMemoryStore()

)

生命周期上下文

控制核心智能体步骤之间发生的情况 - 拦截数据流以实现横切关注点,如摘要、防护栏和日志记录。 正如您在模型上下文和工具上下文中所见,中间件是使上下文工程切实可行的机制。中间件允许您钩入智能体生命周期中的任何步骤,并:- 更新上下文 - 修改状态和存储以持久化更改、更新对话历史或保存见解

- 在生命周期中跳转 - 基于上下文移动到智能体循环中的不同步骤(例如,如果满足条件则跳过工具执行,使用修改后的上下文重复模型调用)

示例:摘要

最常见的生命周期模式之一是在对话历史过长时自动压缩。与模型上下文中显示的瞬态消息修剪不同,摘要持久更新状态 - 永久用摘要替换旧消息,该摘要将保存用于所有未来轮次。 LangChain为此提供了内置中间件:Copy

from langchain.agents import create_agent

from langchain.agents.middleware import SummarizationMiddleware

agent = create_agent(

model="openai:gpt-4o",

tools=[...],

middleware=[

SummarizationMiddleware(

model="openai:gpt-4o-mini",

max_tokens_before_summary=4000, # 在4000个标记时触发摘要

messages_to_keep=20, # 摘要后保留最后20条消息

),

],

)

SummarizationMiddleware 自动:

- 使用单独的LLM调用汇总较早的消息

- 用状态中的摘要消息替换它们(永久)

- 保留最近的消息以保持上下文

有关内置中间件的完整列表、可用钩子以及如何创建自定义中间件,请参阅中间件文档。

最佳实践

- 从简单开始 - 从静态提示和工具开始,仅在需要时添加动态功能

- 增量测试 - 一次添加一个上下文工程功能

- 监控性能 - 跟踪模型调用、标记使用和延迟

- 使用内置中间件 - 利用

SummarizationMiddleware、LLMToolSelectorMiddleware等 - 记录您的上下文策略 - 清晰说明正在传递什么上下文以及原因

- 理解瞬态与持久:模型上下文更改是瞬态的(每次调用),而生命周期上下文更改持久保存到状态

相关资源

Connect these docs programmatically to Claude, VSCode, and more via MCP for real-time answers.